The illustration above talks about classification in the animal universe; classification is one of the best ways to understand the universe. In our context, in the previous posting I started to discuss the types of functions that exist in generic Event Processing tools. Today I'll complete the picture by discussing classes of applications. This classification is not a partition: a given application can have elements of multiple classes. This classification answers the question:

What benefit does the customer expect to obtain from an event processing system?

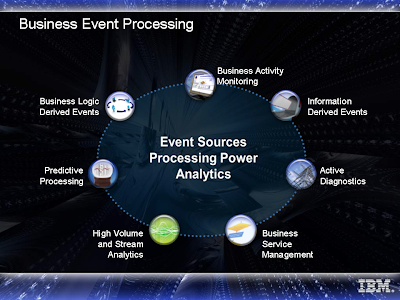

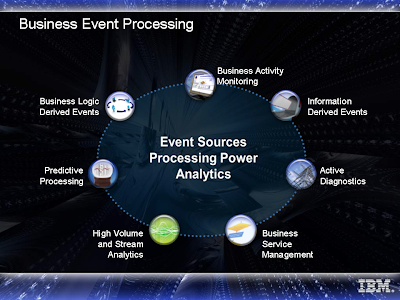

The illustration below is an IBM classification of "Business Event Processing"; it is a slightly modified version of the results of a study we conducted within the IBM Academy of Technology, which analyzed a number of use cases. The EPTS use cases working group is now repeating this exercise, three years later and probably with a somewhat broader perspective, so the end result may be different, but this gives a sense of this type of classification:

Starting from the top and going anti-clockwise (I am left-handed...)

- Business Activity Monitoring (BAM): Observing a collection of activities to find exceptions and to monitor key performance indicators, in order to alert business stakeholders. This typically requires aggregation and predefined pattern matching.

- Business logic derived events (sometimes called RTE - Real-Time Enterprise): detecting situations that require reaction (typically with some time constraints). The situation may be derived either by predefined patterns (e.g. regulation enforcement) or by discovered patterns (e.g. fraud detection). Most applications use predefined patterns.

- Predictive Processing: Processing predicted future events in order to eliminate or mitigate them.

- Stream Analytics: Analysis of various streams (video, voice, data, etc.) to derive individual events (e.g. from a video stream) or trends; this includes "real-time business intelligence".

- Business Service Management: Monitoring whether IT systems satisfy their Service Level Agreements (SLAs).

- Active Diagnostics: Finding the root cause of a problem by looking at a collection of symptoms.

- Information Derived Events (also known as "information dissemination"): personalized subscriptions that provide the right information, at the right granularity, to the right person, at the right time.

In the next few weeks I'll dedicate a separate posting to each of them, with some examples and with references back to functional and non-functional requirements.
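To make the first class slightly more concrete, here is a minimal Python sketch of the aggregation side of BAM: events are grouped into fixed (tumbling) time windows, a KPI is computed per window, and an alert is raised when it crosses a threshold. The `OrderEvent` type, the fulfillment-time KPI and the threshold are hypothetical choices for illustration, not taken from any product.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class OrderEvent:
    # Hypothetical business event: one completed order.
    order_id: str
    timestamp: float          # seconds since the start of the monitoring period
    fulfillment_hours: float  # how long the order took to fulfill


def kpi_alerts(events, window_seconds, threshold_hours):
    """Group events into fixed (tumbling) windows, compute the average
    fulfillment time per window, and return (window_index, average) for
    every window whose average exceeds the threshold."""
    windows = {}
    for e in events:
        index = int(e.timestamp // window_seconds)
        windows.setdefault(index, []).append(e.fulfillment_hours)
    alerts = []
    for index in sorted(windows):
        average = mean(windows[index])
        if average > threshold_hours:
            alerts.append((index, average))
    return alerts


events = [
    OrderEvent("o1", 0, 10.0),
    OrderEvent("o2", 100, 20.0),
    OrderEvent("o3", 3700, 5.0),
]
print(kpi_alerts(events, window_seconds=3600, threshold_hours=12.0))
# [(0, 15.0)]
```

A real BAM deployment would evaluate this continuously and push the alert to a dashboard or a stakeholder; the batch form above only shows the aggregation-plus-threshold logic.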

It was a very busy week and alas I had to neglect the blogging hobby. Now it is Friday night, I am watching a TV program with old Hebrew songs (my favorite), and I decided it is a good time to blog a bit. However, our relatively new cat, who looks somewhat like this (this is not his picture, but that of a similar cat I found on the web), decided that I am a good place to rest on and did not want to move: another creature that is trying to manage me... He is really a kitten that my daughters found and adopted, and as I have written before, giving names in our family is not an easy task, so he has several names and is known as "the cat". I call him Gilgamesh the Terrible.

In 2007 we held the first Dagstuhl seminar on event processing, and the same set of organizers (Mani Chandy, Rainer von Ammon and myself) decided to apply for a second Dagstuhl seminar in 2010. The seminar has passed the committee, with some clarifications about scope that we need to provide. I'll let you know if and when it is finally approved.

The intention of this Dagstuhl seminar (which lasts 4.5 days) is to give a selected group of people the opportunity to meet in an isolated place for in-depth discussions. The proposed goal of this Dagstuhl seminar is to work on an "event processing manifesto". There have been several manifestos in different areas in the past, for example the OODB manifesto. Hopefully, by the time of the Dagstuhl seminar we'll have advanced work done by the various EPTS working groups that are being launched this month, and we'll be able to use their results to better define what "event processing" is. Note that I don't use "complex event processing"; I have explained the reasons before.

One of the questions asked was what the scope of "event processing" is, since working with events is quite a wide area, ranging from interrupt handling in operating systems through graphical programming and more. Much of this is related to programming with events in conventional programming, and there are even books dealing with this area. However, our scope is more modest: generic tools for processing events in IT systems. This scope covers what is needed to build a generic tool, rather than ad-hoc programming hard-coded for every single application, and IT systems rather than operating systems, embedded systems, etc.

The illustration above is a first step in thinking about what an event processing system should include. Some parts of it should be mandatory and some optional; from a functionality point of view there are:

- Routing and filtering -- the most basic form of event processing.

- Mediation -- transformation, enrichment, aggregation, split -- the next level of sophistication.

- Pattern Matching (which I called "pattern detection" in the past), which may involve multiple events of multiple types.
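The three functional levels above can be sketched in a few lines of Python. This is a minimal illustration under assumed event shapes (plain dicts with hypothetical `type`, `customer`, `amount` and `time` fields), not the design of any particular tool: `route` does filtering and routing, `enrich` stands in for mediation (only enrichment is shown; transformation, aggregation and split would be similar small functions), and `deposit_then_withdrawal` is a pattern that correlates events of two different types.

```python
from collections import defaultdict


def route(events, predicate):
    """Routing and filtering: drop events that fail `predicate` and
    dispatch the rest to a channel named after the event type."""
    channels = defaultdict(list)
    for e in events:
        if predicate(e):
            channels[e["type"]].append(e)
    return dict(channels)


def enrich(events, customer_tier):
    """Mediation (enrichment): add reference data from a lookup table."""
    return [{**e, "tier": customer_tier.get(e["customer"], "unknown")} for e in events]


def deposit_then_withdrawal(events, within):
    """Pattern matching across two event types: a Deposit followed by a
    Withdrawal of the same customer no more than `within` time units later."""
    last_deposit = {}
    matches = []
    for e in sorted(events, key=lambda ev: ev["time"]):
        if e["type"] == "Deposit":
            last_deposit[e["customer"]] = e
        elif e["type"] == "Withdrawal":
            d = last_deposit.get(e["customer"])
            if d is not None and e["time"] - d["time"] <= within:
                matches.append((d, e))
    return matches


events = [
    {"type": "Deposit", "customer": "a", "amount": 500, "time": 0},
    {"type": "Withdrawal", "customer": "a", "amount": 450, "time": 5},
    {"type": "Withdrawal", "customer": "b", "amount": 20, "time": 6},
]
large = route(events, lambda e: e["amount"] >= 100)
print(sorted(large))                                    # ['Deposit', 'Withdrawal']
print(enrich(events, {"a": "gold"})[2]["tier"])         # unknown
print(len(deposit_then_withdrawal(events, within=10)))  # 1
```

The first two functions look at one event at a time, while the pattern matcher has to keep state (the last deposit per customer) across events; that state is what makes pattern matching the most sophisticated of the three levels.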

At the bottom of the illustration there are two other entities:

Event processing platforms, which are enablers of scalability, distribution and other good qualities. Event processing platforms may have their own functions, host others, or both.

Pattern discovery, which falls under the category "Intelligent Event Processing". It can be done off-line (typically this is the case) or on-line, in which case pattern matching may be unified with the discovery.

In different types of applications we may need different subsets. For example, fraud detection requires pattern discovery, and security-type detection (e.g. of denial-of-service attacks or intrusions) may use on-line pattern detection. On the other hand, other applications don't require pattern discovery at all, for example compliance with regulations, where the regulations are given and cannot be discovered, or BAM systems, in which the Key Performance Indicators are determined according to the corporate strategy and cannot be discovered. Furthermore, there are applications in which pattern matching is not required at all, and all processing is of the filtering, routing, enrichment and aggregation type.

And I'll finish with a footnote to David Luckham's recent article. David is trying to answer "criticism on the Blogosphere" about CEP being marketing hype and about the lack of value from the current set of products. First, I never thought that there is over-hype; on the contrary, relative to the potential of event processing there is under-hype. I am re-posting this illustration taken from Brenda Michelson's panel presentation at the last EPTS annual symposium.

The hype is relatively low; in contrast, the analyst reports all indicate that the EP market grew by 50% or so in 2008, and IDC even claims that for the second year in a row it is the fastest growing middleware type. About the Blogosphere criticism: as I have written before, much of it stems from different interpretations of the term "complex event processing". For example, some of Tim Bass's postings lead me to the conclusion that he believes in the equation: complex event processing = on-line pattern discovery. Again, eliminating the qualifier "complex", a large set of event processing applications (probably most of the applications I know) do not require stochastic reasoning at all. I'll post a continuation about application types and the functions they require... It is very late, going to sleep.

A comment made by Hans Glide on one of my previous postings on this Blog prompted me to dedicate today's posting to Off-Line Event Processing. Well, as a person who is constantly off any line, I feel at home here...

Anyway, some people may think that the title above is an oxymoron, since they make "real-time" part of the definition of event processing. I have used this picture before; it best describes some of what is written about event processing, by everybody:

This, of course, illustrates a group of blind people touching an elephant; each of them will describe the elephant quite differently. The phenomenon of people saying "event processing is only X", where X defines a subset of the area, is quite common. In our case X = "on-line".

The best approach here is to tell you about a concrete example of a customer application I am somewhat familiar with. The customer is a pharmaceutical company that monitors its supplier-related activities. It looks at events related to these activities and checks them against its internal regulations. The number of such events is several thousand per day and, from a business point of view, there are no real-time requirements: any regulation violation can be observed, and action taken, on the next day. The system accumulates events during the day and activates the event processing system at the end of each day, which is actually batch processing done off-line.
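The off-line mode of operation described above (accumulate during the day, process at the end of the day) can be sketched as follows. The `SupplierEvent` fields, the `EndOfDayBatch` class, the rule signature and the "approved suppliers" regulation are all hypothetical; the point is only that the same rule logic runs over a stored batch rather than over events as they arrive.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class SupplierEvent:
    # Hypothetical supplier-related event.
    supplier: str
    kind: str     # e.g. "Shipment", "Invoice"
    hour: float   # hour of the day at which the event occurred


# A rule receives the whole day's events and returns a list of violations.
Rule = Callable[[List[SupplierEvent]], List[str]]


@dataclass
class EndOfDayBatch:
    buffer: List[SupplierEvent] = field(default_factory=list)

    def accumulate(self, event: SupplierEvent) -> None:
        # During the day events are only stored; no processing happens yet.
        self.buffer.append(event)

    def run(self, rules: Dict[str, Rule]) -> Dict[str, List[str]]:
        # At the end of the day, evaluate every rule over the stored batch,
        # keep only the rules that found violations, and start a fresh day.
        results = {name: rule(self.buffer) for name, rule in rules.items()}
        self.buffer = []
        return {name: found for name, found in results.items() if found}


APPROVED = {"acme"}


def unapproved_shipments(events: List[SupplierEvent]) -> List[str]:
    # Hypothetical regulation: shipments only from approved suppliers.
    return [e.supplier for e in events if e.kind == "Shipment" and e.supplier not in APPROVED]


batch = EndOfDayBatch()
batch.accumulate(SupplierEvent("acme", "Shipment", 9.0))
batch.accumulate(SupplierEvent("globex", "Shipment", 14.5))
print(batch.run({"unapproved_shipments": unapproved_shipments}))
# {'unapproved_shipments': ['globex']}
```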

An interesting question is why this customer chose to use an event processing system rather than the more traditional approach of putting everything in a database and using SQL queries. The answer is quite simple: this application has some interesting properties:

- The number of regulations is relatively high (in the higher range of three digits);

- Many of the regulation rules are indeed detections of temporally oriented patterns that involve multiple events;

- Regulations are inserted or modified frequently.

Given all this, it turned out that using an event processing system off-line was the most cost-effective solution. While using SQL is nominally possible, writing these regulations in SQL is not easy, and their number makes the investment in development and maintenance quite high.
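To illustrate why a rule-oriented formulation can be cheaper to maintain, here is a minimal sketch assuming a hypothetical `PrecededByRule` template: "every event of type `trigger` for a supplier must be preceded by an event of type `required` for the same supplier within `within` days". This is not the customer's actual rule language; it only shows that each temporal, multi-event regulation of this shape becomes one row of data, so inserting or modifying a regulation does not require writing a new query.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple

Event = Tuple[str, str, float]  # (supplier, kind, day)


@dataclass(frozen=True)
class PrecededByRule:
    """Hypothetical rule template: every `trigger` event for a supplier must be
    preceded by a `required` event for the same supplier within `within` days."""
    name: str
    trigger: str
    required: str
    within: float


def violations(rule: PrecededByRule, events: Iterable[Event]) -> List[Tuple[str, str, float]]:
    last_required = {}  # supplier -> day of the most recent `required` event
    found = []
    for supplier, kind, day in sorted(events, key=lambda e: e[2]):
        if kind == rule.required:
            last_required[supplier] = day
        elif kind == rule.trigger:
            previous = last_required.get(supplier)
            if previous is None or day - previous > rule.within:
                found.append((rule.name, supplier, day))
    return found


# Adding or changing a regulation means editing this list, not writing a new query.
RULES = [
    PrecededByRule("audit_before_order", "PurchaseOrder", "QualityAudit", 30),
    PrecededByRule("contract_before_payment", "Payment", "ContractSigned", 365),
]


def check_all(rules, events):
    events = list(events)
    return [v for rule in rules for v in violations(rule, events)]


events = [
    ("acme", "QualityAudit", 1),
    ("acme", "PurchaseOrder", 20),
    ("acme", "PurchaseOrder", 40),
    ("globex", "PurchaseOrder", 5),
]
print(check_all(RULES, events))
# [('audit_before_order', 'globex', 5), ('audit_before_order', 'acme', 40)]
```

In SQL, an "absence within a time window" check of this kind would typically need a correlated `NOT EXISTS` subquery or a self-join per regulation; with hundreds of regulations that change frequently, that per-rule effort is what drives up the development and maintenance cost.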

So, the benefit of using event processing here is neither the real-time aspect nor high throughput support, but simple TCO considerations.

This is not the only application of this type; in fact, I have seen several other cases in which event processing has been used off-line. There is also another branch of off-line processing that combines on-line and off-line together, but I'll write about it in another posting...

More - Later.
